Federal banking agencies trying to ensure AI, ML benefit the many rather than the few


As artificial intelligence and machine learning are deployed across financial sectors, the federal government needs a way to ensure standards for stability and inclusion are followed. Measuring risks and setting benchmarks for emerging fintech is top of mind for agencies such as the National Institute of Standards and Technology and the Commerce Department.

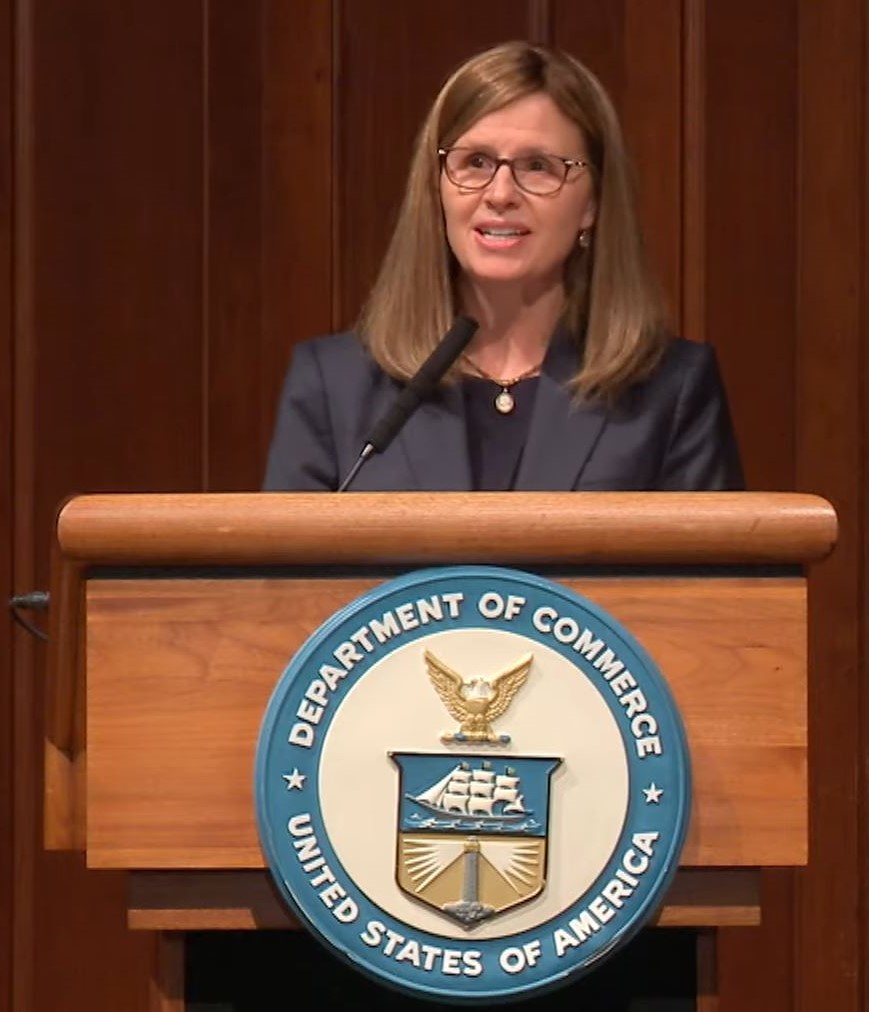

In her first public engagement since being sworn in earlier this month, NIST Director Laurie Locascio told an audience at Stanford University on Wednesday that the president’s 2023 budget request calls for an additional $80 million to expand and strengthen NIST capabilities for targeting critical and emerging technologies. Listing ways the agency is trying to enable trustworthy AI, she said NIST scientists and engineers are developing taxonomies, terminology and testbeds for measuring AI risks.

“NIST is developing a resource center of documents, software and standards and related tools that continue to better understanding and better identification of measurement, and management of various risks associated with AI systems,” she said during the Artificial Intelligence and the Economy Conference.

As a federal AI standards coordinator, NIST is also writing benchmarks and qualitative and quantitative metrics with which to evaluate AI technologies, and the agency is developing tasks, challenge problems, testbeds and software tools, among other efforts. Locascio also noted that in March, NIST released for public comment the first draft of an AI risk management framework (RMF).

“The AI RMF adopts a rights-preserving approach to AI, putting the protection of the individual rights at the forefront of AI development and use,” she said. “The AI RMF framework outlines a process to address traditional technical measures of accuracy, robustness and reliability, but it also acknowledges the sociotechnical characteristics of the system – characteristics such as privacy, interpretability, safety and bias, which are inextricably tied to human and social behavior.”

Protecting human rights and promoting inclusivity in AI and ML technology were central themes for Wednesday’s conference, which was co-hosted by NIST, the Commerce Department, the Stanford Institute for Human-Centered Artificial Intelligence and FinRegLab, a nonprofit technology tester for the financial sector. AI, ML and blockchain are emerging technologies worth keeping an eye on, said Michael Hsu, acting comptroller of the Currency and the administrator of the federal banking system.

Specifically talking about stablecoins, which the federal government is moving to regulate, Hsu said they enable the crypto economy to work. The President’s Working Group on Financial Markets at the Treasury Department, along with Hsu’s office, released a report in November saying stablecoins could be more widely used for payments in the future, both by households and by businesses.

As acting comptroller of the Currency, Hsu is also a director of the Federal Deposit Insurance Corporation. He explained that while a dollar in someone’s wallet is the liability of the Federal Reserve, the dollar in someone’s bank account is the liability of that bank, yet it is still treated as fully fungible. The same cannot be said for stablecoins, however, even though they are meant to hold a steady value tied to the dollar.

“And surprise, surprise, actually, latest surveys show that almost one in five Americans have some kind of crypto exposure. And that population is disproportionately young, diverse and underbanked,” Hsu said. “If we want to protect people, we need to ensure that stablecoins are stable.”

Hsu said that while financial inclusion is a common refrain in the crypto economy, he has yet to see a plan not based on a market cap that yields an exceptionally high return for a venture capitalist. Therefore, embedding ethics, norms and regulation in the development of crypto banking is important now.

To this point, his fellow panelist Susan Athey, an economics of technology professor and associate director of the Stanford Institute for HAI, said technological innovations that make the banking system more efficient or beneficial for more people must be incentivized. The current system of user fees and costs does work for intermediaries, but it is not sustainable for the average customer.

“I don’t think it’s an acceptable outcome. We have to find a way to get people financial services and services in general. We just can’t waste 20% of remittance money, we can’t waste people with very little money, $20 overdraft fee after $20 overdraft fee — that’s just not an acceptable scenario,” Athey said. “So we have to start from the perspective that we’re going to solve that, and then make it safe.”

In that area at least, Commerce Deputy Secretary Don Graves told the panelists that his department and NIST could develop benchmarks for emerging AI. He asked Hsu if AI could reduce system costs for people who have low incomes or may live in another country. Hsu responded by comparing the situation to the development of the internet, which was established via a series of decisions by government and academia about its openness and free access. Standards-setting bodies emerged organically, including the Internet Engineering Task Force and the World Wide Web Consortium.

“Today, we don’t have that. Today, it is all corporate driven. That’s not a bad thing, necessarily, but to the extent that we want standards for the people, I think it’s helpful to look back at that experience and say, well – does that provide some lessons for us as we think this through,” he said. “And I think NIST and Commerce is very well positioned. They’ve got technical expertise, because it’s very technical stuff, you can’t just be … someone like me who’s slightly better-than-average informed. You need more than that, right, to be able to engage in that discussion and get to those outcomes.”

It takes a leader with a public interest mindset, he said.

Copyright © 2024 Federal News Network. All rights reserved.

Amelia Brust is a digital editor at Federal News Network.
